IPTC Managing Director Brendan Quinn presented on IPTC’s work in content provenance and authenticity at the Reimagining DAM conference in Munich, Germany, last week.

The conference is organised by Digital Asset Management software vendor (and IPTC member) Fotoware for its customers and others in the industry who are interested in their work.

As Fotoware’s Chief Product and Technology Officer Janniche Moe said in her introduction, we are now in a world where we can’t answer the most basic questions about content: “Where did this come from? Can we use it? Can we trust it?”

C2PA, and the IPTC’s work to make it workable for the media industry, addresses that problem.

Quinn’s presentation focused on the threat of fake news and misinformation to the media industry, how C2PA can solve the problem, and how IPTC is helping media companies to implement C2PA Content Credentials in a simple way by “stamping” content with their publisher signature.

To learn more on this topic, attend the IPTC Spring Meeting and the IPTC Media Provenance Summit in Toronto, Canada in April 2026.

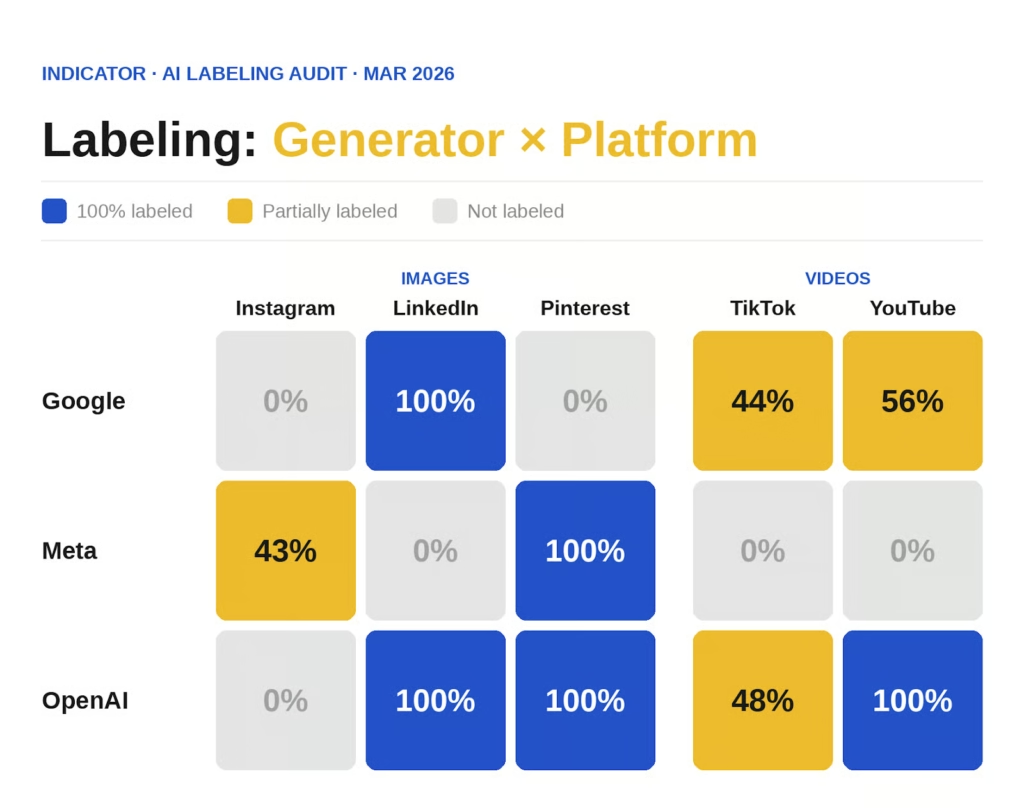

Indicator, an online publication focusing on studying and exposing digital deception and manipulation, has published an updated analysis of how the output of generative AI tools is shown on social media platforms.

The study, an update to a previous study conducted in October, shows some good news: for example, all image and video content created by OpenAI models was correctly labeled as AI-generated by LinkedIn, Pinterest, and YouTube.

However, some significant gaps remain.

For example, some images from Meta AI were recognised as AI-generated by Instagram, but they were not recognised by LinkedIn, TikTok or YouTube. Meta AI uses IPTC’s Digital Source Type property in the media file’s XMP header (the typical way to use IPTC Photo Metadata) to signal AI-generated content, so this means that the IPTC DigitalSourceType property is not being examined by these platforms.

OpenAI and Google Gemini both use C2PA metadata to assert digital source type (also using IPTC’s Digital Source Type vocabulary, but this time embedded in a C2PA manifest). However, Pinterest, for example, picked up only OpenAI’s version of the C2PA metadata, not Google’s. Pinterest did surface Meta AI’s content via the IPTC Digital Source Type tag, and was equal-best overall, tying with LinkedIn, which recognised all content from Google and OpenAI but not from Meta.

IPTC Managing Director Brendan Quinn was quoted in the article as saying “Tech platforms have the talent to implement C2PA tomorrow; they simply need the will to prioritize it.”

Why not both?

On the creation side, OpenAI and Google Gemini declare AI-generated content using IPTC’s Digital Source Type vocabulary embedded in a C2PA manifest. (Unfortunately they each use different versions of the C2PA spec, so results are not consistent across all social media platforms, even those that read C2PA metadata.)

Meta AI uses the same vocabulary, embedded in the Digital Source Type property in “regular” IPTC embedded photo metadata.

We would recommend that all AI engines use both techniques, to give their AI disclosure information the greatest chance of being surfaced by all platforms.

On the consumption side, it seems that Instagram examines the IPTC DigitalSourceType property but not C2PA. Conversely, LinkedIn examines C2PA but not IPTC. Pinterest seems to be the only platform that looks at both, but its implementation doesn’t analyse the more complex C2PA metadata assertions used by Google, meaning that it only surfaces OpenAI’s simpler implementation.
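As a rough illustration of what "looking at both" could mean on the consumption side, the sketch below checks the IPTC Digital Source Type in an XMP packet and in a C2PA manifest, then combines the results. It is a minimal sketch under stated assumptions, not any platform's actual implementation: the function names are hypothetical, the XMP packet is assumed to have been extracted from the file already, and the manifest is assumed to be available as an already-validated dictionary containing an `assertions` list whose actions carry a `digitalSourceType` field. Real manifests can be more complex (for example, nested ingredients), and signature validation is out of scope here.

```python
import xml.etree.ElementTree as ET
from typing import Optional

# Namespace of the IPTC Extension schema used in XMP.
IPTC_EXT_NS = "http://iptc.org/std/Iptc4xmpExt/2008-02-29/"
DST_TAG = f"{{{IPTC_EXT_NS}}}DigitalSourceType"

# Digital Source Type terms treated as "AI involved" in this sketch.
AI_TERMS = {"trainedAlgorithmicMedia", "compositeWithTrainedAlgorithmicMedia"}


def source_types_from_xmp(xmp_packet: str) -> set:
    """Collect DigitalSourceType values written as XMP elements or attributes."""
    found = set()
    for elem in ET.fromstring(xmp_packet).iter():
        if elem.tag == DST_TAG and elem.text:
            found.add(elem.text.strip())
        if DST_TAG in elem.attrib:
            found.add(elem.attrib[DST_TAG].strip())
    return found


def source_types_from_manifest(manifest: dict) -> set:
    """Collect digitalSourceType values from actions assertions.

    The dictionary shape is an assumption made for this example.
    """
    found = set()
    for assertion in manifest.get("assertions", []):
        if not assertion.get("label", "").startswith("c2pa.actions"):
            continue
        for action in assertion.get("data", {}).get("actions", []):
            if action.get("digitalSourceType"):
                found.add(action["digitalSourceType"])
    return found


def is_ai_generated(xmp_packet: Optional[str], manifest: Optional[dict]) -> bool:
    """Return True if either signal declares an AI-related source type."""
    types = set()
    if xmp_packet:
        types |= source_types_from_xmp(xmp_packet)
    if manifest:
        types |= source_types_from_manifest(manifest)
    return any(t.rsplit("/", 1)[-1] in AI_TERMS for t in types)


# Illustrative sample data, not taken from any real file.
SAMPLE_XMP = """<x:xmpmeta xmlns:x="adobe:ns:meta/">
 <rdf:RDF xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#">
  <rdf:Description rdf:about=""
    xmlns:Iptc4xmpExt="http://iptc.org/std/Iptc4xmpExt/2008-02-29/"
    Iptc4xmpExt:DigitalSourceType="http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"/>
 </rdf:RDF>
</x:xmpmeta>"""

SAMPLE_MANIFEST = {
    "assertions": [
        {
            "label": "c2pa.actions",
            "data": {
                "actions": [
                    {
                        "action": "c2pa.created",
                        "digitalSourceType": "http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia",
                    }
                ]
            },
        }
    ]
}
```

Calling `is_ai_generated(SAMPLE_XMP, None)` or `is_ai_generated(None, SAMPLE_MANIFEST)` exercises each path on its own, which mirrors the situation where a file carries only one of the two signals.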

“Whether they want to or not,” platforms are “just going to have to deal with this”

The article noted that looming legislation from California and other jurisdictions would force platforms to implement AI surfacing properly, but in the meantime there is a risk: Maurice Jakesch, assistant professor of computational social science at Bauhaus-University in Weimar, is reported as saying that “an inconsistent and incomplete labeling setup may have unexpected consequences on online trust.”

Bruce MacCormack of Neural Transform, who has worked with CBC/Radio-Canada on establishing C2PA, joined a panel in Geneva launching an initiative from the World Intellectual Property Organisation: the Artificial Intelligence Infrastructure Initiative (AIII).

Pronounced “A triple I”, the initiative seeks to bring together representatives from creators and rights holders across industries to work on a common approach to ensuring that creatives are compensated for AI use of their content.

Bruce MacCormack is Chair of IPTC’s Media Provenance Committee, which is working on bringing C2PA technology to the news media industry. Bruce spoke about how his experience at CBC in establishing C2PA can help to bring the industry together to create a set of policies and technical solutions to address an industry-wide problem.

A featured speaker was award-winning musical artist Imogen Heap, who spoke about the importance of metadata for the music industry and creatives generally, and of her Auracles project bringing artist data together. Asked if she was optimistic about the future of creative industries, Heap said that she had to be hopeful, because the livelihoods of so many creatives across so many art forms depended on it.

Other speakers at the event included Chris Horton of Universal Music Group, Mark Isherwood of music interoperability standard DDEX, Ana da Motta, Senior Manager Digital Affairs & Artificial Intelligence for Amazon Web Services, Alessandra Sala, Senior Director of Artificial Intelligence and Data Science, Shutterstock, along with Ulrike Till, Director and Kenichiro Natsume, Assistant Director General of WIPO.

AI in the Newsroom: A High-Level Panel

Brendan Quinn, Managing Director of the IPTC, joined a high-level panel to explore the transformative impact of AI on the global media landscape. He was joined on stage by Peter Kropsch, CEO of Deutsche Presse-Agentur (dpa) and Earl J. Wilkinson, CEO of the International News Media Association (INMA). The session was moderated by Najlaa Habriri, Senior Editor and Political Commentator at Asharq Al-Awsat, a Saudi newspaper based in London.

Key Discussion Points

The wide-ranging conversation addressed how news organisations can navigate the “smart media” era:

- Content Control: Leveraging technical standards to help publishers retain rights and control over their output.

- Editorial Integrity: Integrating AI into workflows while safeguarding accuracy, accountability, and editorial responsibility.

- The Modern Newsroom: How hybrid roles, blending editorial, data, and audience expertise, are reshaping recruitment and staff development.

International representation

The forum featured many speakers from the international media industry, including Ben Smith (Semafor), Tony Gallagher (The Times), Karen Elliott House (formerly of The Wall Street Journal), Julie Pace (Associated Press) and Vincent Peyrègne (formerly of WAN-IFRA), alongside prominent local media leaders from across the Middle East.

IPTC’s Managing Director Brendan Quinn spoke at the event Breaking the News? Global perspectives on the future of journalism in the age of AI in Berlin on Wednesday 28th January, an event organised by Deutsche Welle Akademie, an arm of IPTC member Deutsche Welle.

Barbara Massing, Director General of Deutsche Welle, gave the opening presentation, in which she emphasised that all news organisations depend on earning, and keeping, the trust of their audience: “Trust is not a given. It must be earned. Every single day.”

Reem Alabali Radovan, Germany’s Federal Minister for Economic Cooperation and Development, gave her thoughts on the importance of media companies to global democracy.

Courtney Radsch of the Open Markets Institute gave a keynote presentation where she encouraged media organisations to hold strong against the narrative pushed by AI vendors, asking them not to give in to the jargon of the industry. AI tools do not have “hallucinations”, they make “fabrications.”

IPTC’s Brendan Quinn spoke on a panel on the relationship between AI vendors and publishers, along with representatives from OpenAI and Cloudflare (other AI companies were invited to attend but declined the invitation). Quinn spoke about the IPTC’s AI opt-out guidelines and discussed the complicated landscape and the lack of progress in the IETF AI Preferences Working Group, as documented in a recent Open Future Foundation paper.

A report from Deutsche Welle on the event summarised the following takeaways:

- Collaboration and solidarity: Media companies only have power together

- Tech companies need to be regulated – they won’t self-regulate

- Media need a clear understanding of tech business models

- Media can and should use public-interest AI tools

- We need a better dialogue with big tech – demonisation won’t help

- Journalism must be treated as critical infrastructure, not just an industry

Thanks to Deutsche Welle Akademie for hosting the event and inviting Brendan Quinn to speak.

The survey describes various technologies which could be used by content owners and rights holders to express opt-in or opt-out information regarding whether rights holders allow AI engines to train on media content. It asked our thoughts on how widely they have been adopted and how suitable they would be to be adopted as a mechanism for expressing machine-readable opt-out preferences.

This is the first step in a multi-stage process, which will culminate in the publication by the European Commission of the final list of generally agreed TDM opt-out protocols.

We feel that the IPTC is well-suited to participate in this work for several reasons:

- IPTC has created one such mechanism (the Data Mining property of the IPTC Photo Metadata Standard, created in conjunction with the PLUS Coalition)

- IPTC has been involved in the creation of other technologies in this area, such as the W3C Community’s Text and Data Mining Reservation Protocol (TDMRep), C2PA, and the IETF’s work in the AIPrefs Working Group

- IPTC has published a guidance document for publishers on best practices for implementing AI opt-out technologies

We look forward to continuing work with the European Commission, and others, on this subject.

IPTC member France Télévisions has started signing its daily news broadcasts using C2PA and FranceTV’s C2PA certificate, which is on the IPTC Origin Verified News Publisher List.

This makes FranceTV the first news provider in the world to routinely sign its daily news output with a C2PA certificate.

The work won FranceTV the EBU Technology & Innovation Award this year.

IPTC has assisted FranceTV in this work and continues to work with FranceTV along with other broadcasters and publishers on signing their content using the C2PA specification.

A specific page Retrouvez nos JT certifiés (“Find our certified news programmes”) is available on FranceTV’s site franceinfo.fr, where the latest 1pm and 8pm news programmes are published containing a C2PA signature. The page Pour vous informer en toute sécurité contains more information (in French) about FranceTV’s work on transparency and authenticity.

We congratulate FranceTV for their work and look forward to further collaboration in 2026 and beyond.

The Media Provenance Summit brought together leading experts, journalists and technologists from across the globe to Mount Fløyen in Bergen, Norway, to address some of the most pressing challenges facing news media today.

Hosted by Media Cluster Norway, and organised together with the BBC, the EBU and IPTC, the full-day summit on September 23 convened participants from major news organisations, technology providers and international standards bodies to advance the implementation of the C2PA content provenance standard, also known as Content Credentials, in real-world newsroom workflows. The ultimate aim is to strengthen the signal of authentic news media content at a time when it is challenged by generative AI.

“We need to work together to tackle the big problems that the news media industry is facing, and we are very grateful for everyone who came together here in Bergen to work on solutions. I believe we made important progress,” said Helge O. Svela, CEO of Media Cluster Norway.

The program focused on three critical questions:

- How to preserve C2PA information throughout editorial workflows when not all tools yet support the technology.

- When to sign content as it moves through the workflow: at device level, organisational level, or both.

- How to handle confidentiality and privacy issues, including the protection of sources and sensitive material.

“We were very happy to see a focus on real solutions, with some great ideas and tangible next steps,” said IPTC’s Managing Director, Brendan Quinn. “With participants from across the media ecosystem, it was exciting to see vendors, publishers, broadcasters and service providers working together to address issues in practically applying C2PA to media workflows in today’s newsrooms.”

Speakers included Charlie Halford (BBC), Andy Parsons (CAI/Adobe), François-Xavier Marit (AFP), Kenneth Warmuth (WDR), Lucille Verbaere (EBU), Marcos Armstrong and Sébastien Testeau (CBC/Radio-Canada), and Mohamed Badr Taddist (EBU).

“The BBC welcomes this focus on protecting access to trustworthy news. We are proud to have been founder members of the media provenance work carried out under the auspices of C2PA and we are delighted to see it moving forward with such strong industry support,” said Laura Ellis, Head of Technology Forecasting at BBC Research.

Participants travelled to participate in the summit from as far away as Japan, Australia, the US and Canada.

“We’re pleased to collectively have taken a few hurdles on the way to enabling a broader adoption of Content Provenance and Authenticity”, said Hans Hoffmann, Deputy Director at EBU Technology and Innovation Department. “The definition of common practices for signing content in workflows, retrieving provenance information thanks to soft binding, and better safeguards for the privacy of sources address important challenges. Public service media are committed to fight disinformation and improve transparency, and EBU members were well represented in Bergen. The broad participation from across the industry and globe smooths the path towards adoption. Thanks to Media Cluster Norway for hosting the event!”

The summit emphasised moving from problem analysis to solution exploration. Through structured sessions, participants defined key blockers, sketched practical solutions and developed action plans aimed at strengthening trust in digital media worldwide.

About the Summit

The Media Provenance Summit was organised jointly by Media Cluster Norway, the EBU, the BBC and IPTC, and made possible with the support of Agenda Vestlandet.

For more information, please contact: helge@medieklyngen.no

The IPTC has joined the BBC (UK), YLE (Finland), RTÉ (Ireland), ITV (UK), ITN (UK), EBU (Europe), AP (USA/Global), Comcast (USA/Global), ASBU (Africa and Middle East), Channel 4 (UK) and the IET (UK) as a “champion” in the Stamping Your Content project, run by the IBC Accelerator as part of this year’s IBC Conference in Amsterdam.

These “Champions” represent the content creator side of the equation. The project also includes “participants” from the vendor and integrator community: CastLabs, TCS, Videntifier, Media Cluster Norway, Open Origins, Sony, Google Cloud and Trufo.

This project aims to develop open-source tools that enable organisations to integrate Content Credentials (C2PA) into their workflows, allowing them to sign and verify media provenance. As interest in authenticating digital content grows, broadcasters and news organisations require practical solutions to assert source integrity and publisher credibility. However, implementing Content Credentials remains complex, creating barriers to adoption. This project seeks to lower the entry threshold, making it easier for organisations to embed provenance metadata at the point of publication and verify credentials on digital platforms.

The initiative has created a proof-of-concept open source ‘stamping’ tool that links to a company’s authorisation certificate, inserting C2PA metadata into video content at the time of publishing. Additionally, a complementary open-source plug-in is being developed to decode and verify these credentials, ensuring compliance with C2PA standards. By providing these tools, the project enables media organisations to assert content authenticity, helping to combat misinformation and reinforce trust in digital media.

This work builds upon the “Designing Your Weapons in the Fight Against Disinformation” initiative at last year’s IBC Accelerator, which mapped the landscape of digital misinformation. The current phase focuses on practical implementation, ensuring that organisations can start integrating authentication measures in real-world workflows. By fostering an open and standardised approach, the project supports the broader media ecosystem in adopting content provenance solutions that enhance transparency and trustworthiness.

Attend the project’s panel presentation session at the International Broadcasting Convention, IBC2025 in Amsterdam on Monday, Sept 15 at 09:45 – 10:45.

The speakers on the panel on Monday September 15 are all from IPTC member organisations:

- Henrik Cox, Solutions Architect – OpenOrigins

- Judy Parnall, Principal Technologist, BBC Research & Development – BBC

- Mohamed Badr Taddist, Cybersecurity Master graduate, content provenance and authenticity – European Broadcasting Union (EBU)

- Tim Forrest, Head of Content Distribution and Commercial Innovation – ITN

See more detail on the IBC Show site.

Many of the participating organisations are also IPTC members, so the work started in the project will continue after IBC through the IPTC Media Provenance Committee and its Working Groups.

We are already planning to carry this work forward at the next Media Provenance Summit, which will be held later in September in Bergen, Norway.

Google has announced the launch of its latest phone in the Pixel series, including support for IPTC Digital Source Type in its industry-leading C2PA implementation.

Many existing C2PA implementations focus on signalling AI-generated content, adding the IPTC Digital Source Type of “Generated by AI” to content that has been created by a trained model.

Google’s implementation in the new Pixel 10 phone differs by adding a Digital Source Type to every image created using the phone, using the “computational capture” Digital Source Type to denote photos taken by the phone’s camera. In addition, images edited using the phone’s AI manipulation tools show the “Edited using Generative AI” value in the Digital Source Type field.

Note that the Digital Source Type information is added using the “C2PA Actions” assertion in the C2PA manifest; unfortunately it is not yet added to the regular IPTC metadata section in the XMP metadata packet. So it can only be read by C2PA-compatible tools.

Background: what is “Computational Capture”?

The IPTC added Computational Capture as a new term in the Digital Source Type vocabulary in September 2024. It represents a “digital capture” that does involve some extra work using an algorithm, as opposed to simply recording the encoded sample hitting the phone sensor, as with simple digital cameras.

For example, a modern smartphone doesn’t simply take one photo when you press the shutter button. Usually the phone captures several images from the phone sensor using different exposure levels and then an algorithm merges them together to create a visually improved image.

This of course is very different from a photo that was created by AI or even one that was edited by AI at a human’s instruction, so we wanted to be able to capture this use case. Therefore we introduced the term “computational capture”.
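To make the distinction concrete, a tool that surfaces provenance information might translate Digital Source Type values into reader-facing labels along the following lines. This is only a sketch: the function name and the label wording are hypothetical, and the term identifiers (in particular `computationalCapture`) are assumed to match the IPTC Digital Source Type vocabulary, so they should be checked against the published vocabulary before use.

```python
# Base URI of the IPTC Digital Source Type vocabulary.
DST_BASE = "http://cv.iptc.org/newscodes/digitalsourcetype/"

# Term IDs are assumed to match the IPTC vocabulary; the label text is
# illustrative wording for this example, not official IPTC names.
DISPLAY_LABELS = {
    "digitalCapture": "Captured by a camera",
    "computationalCapture": "Captured by a camera with computational processing",
    "compositeWithTrainedAlgorithmicMedia": "Edited using generative AI",
    "trainedAlgorithmicMedia": "Generated by AI",
}


def describe_source_type(uri: str) -> str:
    """Map a Digital Source Type URI to a short display label."""
    term = uri.rsplit("/", 1)[-1]
    return DISPLAY_LABELS.get(term, "Unknown source type")
```

Keeping computational capture as its own entry, rather than folding it into either the plain capture or the AI categories, reflects the separation described above.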

For more information and examples, see the Digital Source Type guidance in the IPTC Photo Metadata User Guide.
